Bias Detection in Intelligence Reporting: What Tools Can (& Can’t) Catch

If two well-written reports cite the same sources but reach different conclusions, which one do you trust—and why? In an era where generative AI can both accelerate drafting and amplify error, the invisible risk is not a missing footnote; it’s the bias you don’t catch.

Anxiety about manipulated content and deepfakes influencing high‑stakes outcomes, including elections, is widespread and growing, and it raises the bar for rigor in intelligence products.

Forms of Bias that Derail Reporting

Experienced analysts face a familiar bind: with the immense amount of data they’re processing, the need for speed collides with the need for defensible insight. Under time pressure, sentiment bias can creep in through tone, selection bias through what gets collected and what doesn’t, and framing bias through narrative choices across languages and media ecosystems. Tooling helps, but it is not a panacea.

Large language models can hallucinate, reinforce bias, or misread cultural context if left unchecked; “black-box” scores without source traceability won’t withstand audit or legal scrutiny. Meanwhile, leaders still expect products that are timely, neutral, and explainable.

Bias shows up in intelligence products in predictable ways that seasoned readers can spot:

Sentiment Bias: evaluative or emotive wording that nudges the reader toward a conclusion.

Selection Bias: over-reliance on sources that share a perspective, language, region, or outlet type while underweighting others.

Framing Bias: structuring the story so that context, alternatives, or uncertainty are minimized.

Analysts also face cognitive traps such as confirmation bias and availability bias, each of which pushes teams to overweight convenient or familiar evidence and under-test competing hypotheses. The remedy is disciplined tradecraft and workflow guardrails, not one-off fixes.

What Automated Tools Can Reliably Catch

Modern AI and workflow tools are genuinely useful at surfacing bias signals that humans can review, such as:

Language-Level Cues: detection of subjective or emotive phrasing, polarity shifts, and overconfident language that undermine neutrality. Indago’s built-in bias detection flags potential sentiment, confirmation, and selection bias in both source documents and draft text.

Source Integrity Patterns: ownership/affiliation summaries, past credibility controversies, and reliability tagging across a list of inputs (e.g., “Source Reliability & Bias Assessment” workflows). These signals help analysts calibrate trust without collapsing nuance.

Narrative Divergence: automated comparisons of how different media ecosystems frame the same event (e.g., government-aligned vs. independent outlets; cross-language coverage). Consistent divergence or omission patterns can indicate coordinated influence or disinformation themes.

Amplification Anomalies: early-origin detection, bot-like propagation, and copy-paste cascades across social platforms—useful for separating organic discussion from coordinated spread.

Confidence and Provenance: machine-generated confidence estimates and per-claim citation trails that make review faster and more auditable.

These capabilities do not “fix” bias, but they raise review-worthy indicators and attach traceability so teams can adjudicate with context.
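To make the "Language-Level Cues" item concrete, here is a minimal sketch of a lexicon-based flagger. It is not how Indago or any particular product works; the word lists, the `Flag` record, and the `flag_language_cues` function are all hypothetical names chosen for illustration, and a production system would likely use a trained model rather than fixed lists.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately tiny word lists; a real lexicon would be
# curated and maintained by the analytic team.
EMOTIVE = {"outrageous", "shocking", "brutal", "heroic", "disastrous"}
OVERCONFIDENT = {"clearly", "undoubtedly", "certainly", "obviously", "always", "never"}


@dataclass
class Flag:
    category: str  # "emotive" or "overconfident"
    term: str      # matched word, lowercased
    start: int     # character offset where the match begins
    end: int       # character offset just past the match


def flag_language_cues(text: str) -> list[Flag]:
    """Return review-worthy wording with character spans, in document order."""
    flags = []
    for match in re.finditer(r"[A-Za-z']+", text):
        word = match.group().lower()
        if word in EMOTIVE:
            flags.append(Flag("emotive", word, match.start(), match.end()))
        elif word in OVERCONFIDENT:
            flags.append(Flag("overconfident", word, match.start(), match.end()))
    return flags


draft = "The regime's brutal response will undoubtedly fail."
for flag in flag_language_cues(draft):
    # Each flag points back to an exact text span so a human can review it.
    print(flag.category, repr(draft[flag.start:flag.end]))
```

Returning character spans rather than a single score keeps every indicator traceable to the wording that triggered it, which is the property the section above argues for.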

Where Automation Falls Short (& Why Human Judgment is Non‑Negotiable)

Human-in-the-loop (HITL) is essential—particularly for political sentiment, risk screens, narrative briefings, and any decision with legal or operational consequence. Analyst review, overrides, and documented rationales are part of trustworthy practice, not afterthoughts.

Bias signals are not verdicts. AI cannot:

Weigh geopolitical, cultural, or legal nuance embedded in wording choices.

Decide proportionality—how much weight a single biased source should carry in a fast-moving brief.

Resolve ambiguity when high-stakes conclusions hang on incomplete or adversarial information.

Replace ethical judgment where civil liberties, attribution, or diplomatic impact are at play.

Embedding Bias Detection into the Workflow

Indago puts this approach into practice: it is a workflow‑first platform with a built-in bias detection model that flags potential sentiment, confirmation, and selection bias in sources and report text. The goal is not to “automate objectivity,” but to help your team move faster while preserving the standards your mission demands.

Analysts keep control throughout: the system surfaces bias indicators, but humans decide whether language, sourcing, or balance needs to change, and they document that judgment. Here’s how:

Early-Stage Report Framing & Context-Setting: analysts define purpose, audience, and structure; Indago supports multilingual intake and translation while preserving citation paths.

Mid-Stage Report Bias Intervention: section-level model control and isolated regeneration let teams test alternate framings and quickly compare tone and evidence usage to see which model’s output best fits their use case.

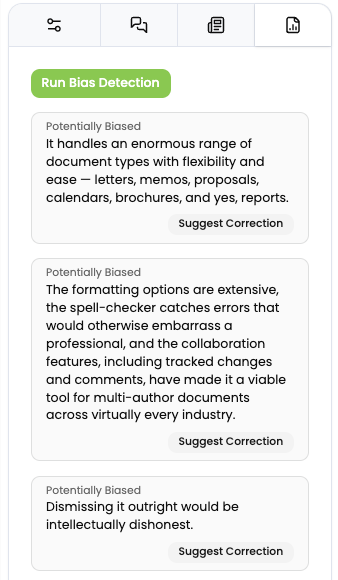

End-Stage Built-In Bias Detection: Indago flags potential sentiment, confirmation, and selection bias in source sets and draft prose. It highlights each finding with confidence cues, drafts neutral replacement language, and links back to the flagged text spans for human review.

Traceability: per-claim provenance and metadata maintain an audit-ready chain from input to output. This is critical for internal QA, OIG review, or client-facing transparency.

HITL By Design: review checkpoints, confidence scoring, and review flags route risky outputs for human approval. Role-based access and audit logs capture who changed what and why—supporting defensibility.
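The "HITL By Design" step can be sketched in a few lines. This is an illustration under stated assumptions, not Indago's implementation: the `Section` and `ReviewDecision` records, the `0.8` confidence threshold, and both function names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ReviewDecision:
    reviewer: str
    action: str       # e.g. "accept" or "override"
    rationale: str    # why the reviewer made this call
    timestamp: str    # ISO 8601, UTC


@dataclass
class Section:
    title: str
    model_confidence: float  # 0.0 to 1.0, as reported by the drafting model
    bias_flag_count: int     # number of open bias indicators on this section
    decisions: list = field(default_factory=list)  # audit trail


def needs_human_approval(section: Section, min_confidence: float = 0.8) -> bool:
    """Route a section to review if it has open flags or low confidence."""
    return section.bias_flag_count > 0 or section.model_confidence < min_confidence


def record_decision(section: Section, reviewer: str, action: str, rationale: str) -> ReviewDecision:
    """Append a reviewer decision to the audit trail; a rationale is mandatory."""
    if not rationale.strip():
        raise ValueError("a documented rationale is required")
    decision = ReviewDecision(
        reviewer, action, rationale, datetime.now(timezone.utc).isoformat()
    )
    section.decisions.append(decision)
    return decision
```

Rejecting an empty rationale is the design choice that matters here: the log then records not only who changed what, but why.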

Practical Checks Teams Can Run Today

Effective bias detection requires understanding intent, context, and consequences, all areas where human judgment is irreplaceable. Human analysts excel at triangulating between biased sources to extract objective insights. They can weigh the perspectives of different actors based on their positions, motivations, and track records, and they notice what information is missing.

Skilled analysts reduce bias from the outset with a few key habits:

Diversify Inputs: enforce minimum diversity across outlet type, region, and language for any assessable claim.

Compare Framings: run cross-ecosystem comparisons; note what one set emphasizes that others omit.

Tone Scrub: remove subjectivity and absolutes; prefer evidentiary verbs (e.g., “assessed,” “evaluated,” “corroborated”) and avoid speculative or emotive phrasing.

Confidence and Caveats: attach confidence levels to key judgments and state known gaps.

Re-Run with Counterprompts: ask for the best argument against your working thesis; test whether your selection/weighting still holds.

Indago streamlines these moves with templates for foreign media monitoring, narrative divergence detection, and source reliability/bias assessment—each producing traceable, reviewable outputs.
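The "Diversify Inputs" check is simple enough to run as a script before a claim goes into a draft. The sketch below assumes each source has already been tagged with an outlet type, region, and language; the `Source` record, the `diversity_gaps` function, and the minimum of two distinct values per attribute are hypothetical choices, not a standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    name: str
    outlet_type: str  # e.g. "state media", "independent", "wire service"
    region: str
    language: str


def diversity_gaps(sources, minimums=None):
    """Return {attribute: (distinct_found, required)} for each unmet minimum."""
    # Assumed default: at least two distinct values per attribute.
    minimums = minimums or {"outlet_type": 2, "region": 2, "language": 2}
    gaps = {}
    for attribute, required in minimums.items():
        distinct = {getattr(source, attribute) for source in sources}
        if len(distinct) < required:
            gaps[attribute] = (len(distinct), required)
    return gaps


# Placeholder sources supporting a single claim.
claim_sources = [
    Source("Outlet A", "state media", "Region X", "ru"),
    Source("Outlet B", "independent", "Region X", "en"),
]
print(diversity_gaps(claim_sources))
```

An empty result means the claim meets the configured minimums. A non-empty result is a prompt to collect more, not a verdict on the claim itself.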

Conclusion

In high-stakes environments, responsible bias detection is an advantage: it shortens review cycles, strengthens trust with stakeholders, and makes products defensible when challenged, all without outsourcing judgment to a black box.

If you want to see this in practice—how bias flags, source transparency, section‑level model control, and collaborative review come together without slowing the mission—sign up for a demo to learn more about how Indago can accelerate your intelligence operations.